If your application is experiencing load problems, time to bring out the champagne! Your web-app must be pretty successful to get to this stage. You’ve reached the capacity number of users that your application is able to handle, things are beginning to slow down and error out. Network requests start timing out, database queries are taking a while to execute, pages are loading slowly. Congratulations — your app is ready to scale! Time to put that champagne down though… You need to handle these growing pains before your users leave your app for that annoying competitor copying your idea.

The Costs Of Scaling

Before you start vertically, horizontally and inside-out* sharding your database, an important principle should be kept in mind. You shouldn’t implement premature optimisations or attempt to scale your app before it’s actually needed. Implementing scaling solutions introduces the following complexities:

- Adding new features takes longer

- The system becomes more complex with more pieces and variables involved

- Code can be more difficult to test

- Finding and resolving bugs becomes harder

You should only accept these trade-offs if your app is at capacity. Keep the system simple, don’t introduce scaling complexities unless it’s warranted.

*inside-out database sharding is not a real solution. The point is there is a spectrum of scaling solutions, don’t implement them unless you need to!

Finding Bottlenecks Using Metrics

Every application/system is different, to determine which scaling solution to implement you must first determine where the bottleneck is. Time to check your resource monitoring system or create one if you haven’t already. No matter the stack you are working with there will be tools available for monitoring your resources. If you’re running on any of the leading IaaS (Infrastructure as a Service) providers such as AWS, Microsoft Azure and GCP, there are great application performance management tools out of the box to choose from.

These tools illustrate the performance of your resources through graphs and other data visualisation methods. Use these graphs to look for spikes or flat tops. These commonly imply that a resource was overwhelmed or at capacity and was not able to handle new operations. If nothing is apparently at capacity, but your app seems to be running slow, try sprinkling logs throughout heavily used operations. Check the logs for resources that take a long time to load over the network, there could be another server such as a third-party API or your database server introducing delays. You should be hosting your database on another server, if that’s the case then you should also check your resource monitoring for that machine.

By thinking about how your application is used by your users and thinking logically about the errors or cracks beginning to show, determining where the bottleneck is can be pretty straight forward. Let’s take Twitter for example, this particular platform is mostly used to read and write tweets. If Twitter’s monitoring services indicated a heavy load on their databases relating to these actions, it would make sense for their team to start optimising that area of the platform. In this article, we will be diving into database scaling solutions, which is usually the first point of failure. If you aren’t already familiar with systems design, I have a brief article that will introduce you to the topic. I recommend getting an understanding of systems design before implementing scaling solutions.

Scaling an App From a Birdseye View

Now that we’ve got a good sense of what/where the problems/bottlenecks are, we can start implementing solutions to address these issues. Remember, simplicity is key, we want to always try to avoid introducing unnecessary complexities.

The high-level goal of scaling solutions is to have the stack do less work for the application’s most common requests or effectively distribute the workload that can’t be eliminated across multiple resources. The way that scaling techniques do this usually translates into one or more of the following:

- Reusing data the app has already looked up

- Eliminating requests from the client for data the app already possesses

- Storing results of common operations in order to reduce repeating computations

- Avoiding complex operations in the request-response cycle

Many scaling techniques boil down to some form of caching. In the past, memory was expensive and scarce; nowadays it’s inexpensive to add it to servers. Memory is many orders of magnitude faster to access data compared to disk or the network; in this era where users have a plethora of choices, coupled with our minimal attention span, speed and performance are paramount to your application’s survival.

Database Scaling Solutions

Cache Database Queries

Caching database queries is one of the simplest improvements you can make to handle database load. Usually an application will have a handful of queries that make up the majority of the requests made. Rather than making a round trip over the network each time for that data, it can simply be cached in memory on the webserver. The first request will fetch data from the database and cache the results on the server, future requests just read from the cache. This results in increased performance as the data spends less time traveling through the network and is closer to the client. It also results in more of the database server’s resources being available as significant workload is distributed to the cache system. As well as increased availability, if the database is unavailable, the cache can still provide continuous service to the application, making the system more resilient to failures. There are a lot of tools that you can use to run an analysis on database query logs, so you can see which queries are taking the longest to complete and which queries are run most frequently.

Obviously, data that’s cached can become ‘stale’ or out of date quite quickly. You will have to be mindful of which data you chose to cache and how long for. For example, an online newspaper would have a new daily newspaper every 24 hours, rather than requesting that data from the database every time a user hits the site, they can cache that data on the webserver for 24 hours and serve it straight from the server. Product or business requirements will dictate what can and can’t be cached.

Database Indexes

Database indexing is a technique that improves the speed of data retrieval operations on a database table. Indexes are used to quickly locate data without having to search every row in a table every time the table is accessed. Usually, the data structure for a database index will be a binary search tree. This allows the time complexity of accessing the data to be lowered from linear time O(n) to logarithmic time Olog(n).

Depending on the number of rows in a table this can save significant amounts of time off queries that use the indexed column. For example, if you had 10,000 users and your application has profile pages that look up a user by their username, an un-indexed query would examine every single row in the users table until it finds the profile that matches the username passed into the query. That could take up to 10,000 row examinations O(n). By creating an index for the “username” column, the database could pull out that row under logarithmic time complexity (Olog(n)). In this case, the maximum number of row examinations would be 14 instead of 10,000!

Effective indexing reduces the load on the database by increasing efficiency, this also provides significant performance boosts leading to better user experience. Creating an index does add another set of data to be stored on the database, so careful judgment must be exercised when deciding what fields to index. Even with the existing storage space used, indexing tends to be well worth it, especially in modern-day development where memory is cheap and performance is integral to survival.

Time complexity and data structures were mentioned a bit in this section, but not thoroughly explained. If you’re interested in learning about or want to brush up on your understanding of time complexities and data structures, the articles linked above are fantastic resources!

Session Storage

A lot of applications handle sessions by storing a session ID in a cookie, then storing the actual data for the key/value pair of every session in a table on the database. This can become a tremendous amount of reading and writing to your database. If your database is getting overwhelmed with session data, it’d be a good idea to rethink how and where you’re storing that data.

Moving session data to an in-memory caching tool like redis or memcached can be a good option. This will remove the load of the session data from your database and also increase the speed of access since in-memory is faster than persistent disk storage which most databases use. However, since in-memory is volatile memory, there is a risk of losing all of the session data if the caching system goes offline.

You could also consider changing your session implementation to storing the session information in the cookie itself, which will move your means of maintaining session state off the server and onto the client instead. JWT’s are the most popular implementation for this pattern. This will alleviate your database of all the session data and remove the dependency of server-side sessions, although it introduces its own sets of challenges.

Master Slave Replication

If your database is still under too much load from reads even after caching common queries, creating efficient indexes, and handling session storage, replication may be the next best stone to turn.

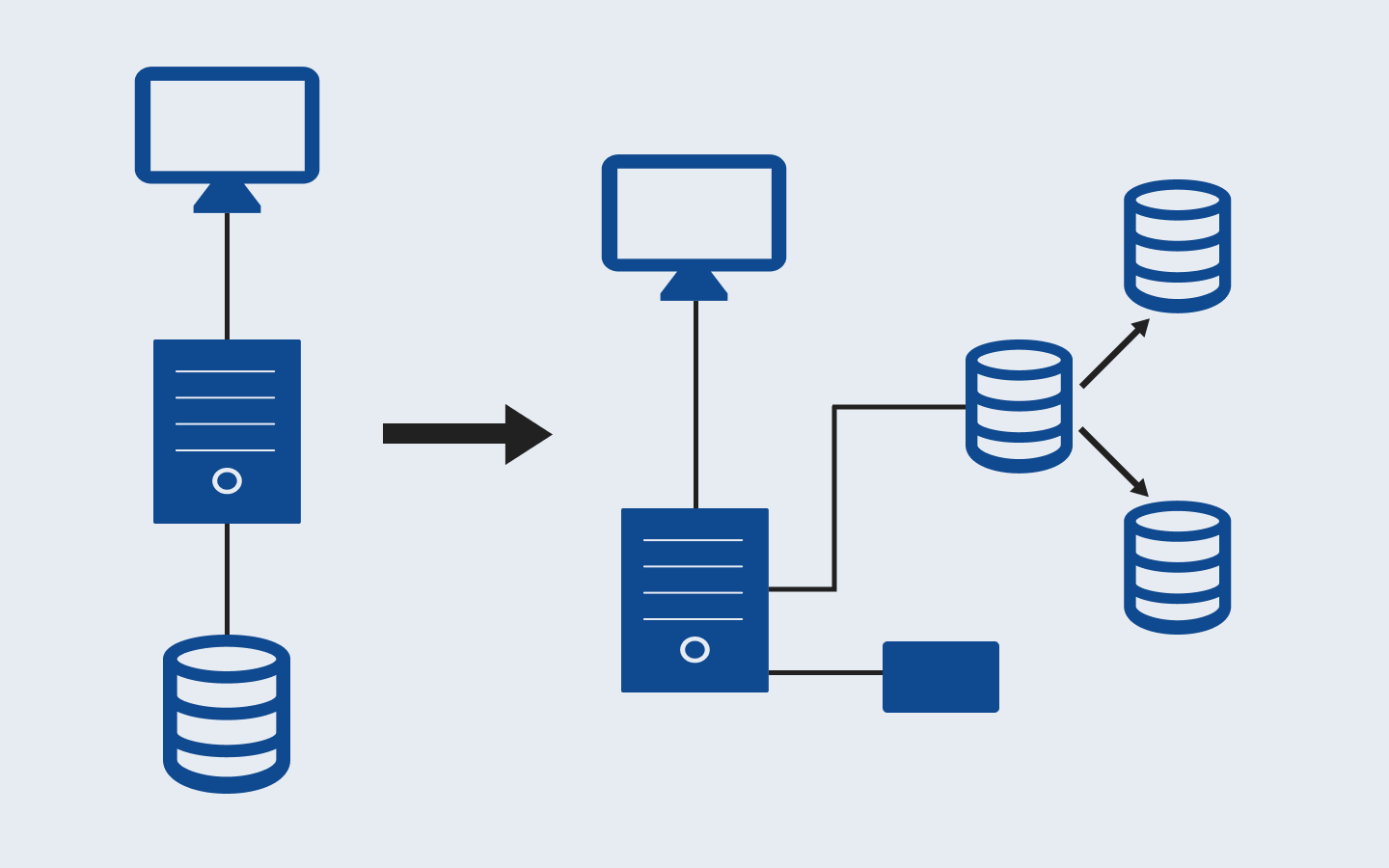

With master-slave replication, you have a single database that you write to. It is cloned into several (as many as you need) slave databases that you read from, with each slave database sitting on another machine* (refer to the diagram below). This takes the reading load off the master database and distributes it across multiple servers. This model also improves the performance of writes as the master database is dedicated to writes, whilst dramatically increasing read speed and reducing latency as slave databases are spread across different regions.

Since each slave database is on another machine, writes to the master database need to propagate through to the slaves which can lead to inconsistent data. If you need to immediately read the data written to the database, say you are updating a profile and want it rendered immediately, you can opt to read from the master database. Slave-master replication is an incredibly powerful scaling solution, but it comes with its fair share of complexities. It’d be wise to implement this solution after exhausting simpler solutions and ensuring effective optimisations within the application.

*This architectural pattern is more commonly known as master-slave replication, but it’s a term that has received criticism over the years and is in the process of being replaced throughout the tech community.

Database Sharding

Most of these scaling solutions so far have focused on reducing load through managing the reads to the database. Database sharding is a horizontal scaling solution to manage load by managing reads and writes to the database. It’s an architectural pattern involving a process of splitting up (partitioning) the master database into multiple databases (shards), which are faster and easier to manage.

There are two types of database sharding techniques — vertical sharding and horizontal sharding (refer to the diagrams below). With horizontal partitioning, tables are taken and put on different machines with each table having identical columns, but distinct rows. Vertical partitioning is more complex, involving splitting one table across multiple machines. A table is separated out and put into new, distinct tables. The data held in one vertical partition is independent of the data in all others, each table holds both distinct rows and columns.

Both sharding techniques facilitate horizontal scaling, also known as ‘scaling out’, which enables the practice of adding more machines to a system in order to distribute/spread the load. Horizontal scaling is often contrasted with vertical scaling, also known as ‘scaling up’, which involves upgrading the hardware of an existing server. Scaling up for a database is relatively simple, although any non-distributed database will have its limits in terms of computing power and storage, so having the freedom to scale out makes your system far more flexible.

A sharded database architecture can also significantly increase the speed of your application’s queries, as well as providing increased resilience to failures. When submitting a query on an unsharded database, it may have to search every row in the table which can be prohibitively slow. Alternatively, by sharding one table into multiple tables, queries have to go over much fewer records to return results. Since each of those tables is on a separate server, the impact of a server becoming unavailable is mitigated. With a sharded database, the impact of an outage will likely affect only a single shard, compared with an unsharded database where an outage has the potential to make an entire application unavailable.

Having a sharded database architecture provides some pretty massive benefits, however, it is complex and has a high implementation and maintenance cost. This is definitely an option you’d want to consider after exhausting other scaling solutions as the ramifications of ineffective implementation can be quite severe.

Conclusion

Congratulations, your app has now got the appropriate solutions in place to effectively handle database load and scale with your app’s success! Not quite time to celebrate just yet though… An effectively scaled server is integral to a performant and reliable application. In the near future, I will be releasing an article covering server and client scaling solutions 🚀